Honest answer upfront: it's getting harder. In 2022, AI music had obvious tells. In 2026, the best AI-generated tracks fool trained listeners on first listen, and even specialized detection tools produce false positives and false negatives at meaningful rates.

That doesn't mean you can't develop a useful sense for it. It means you should approach detection as an informed judgment rather than a checklist. This guide covers the audio characteristics that still reveal AI generation, the metadata signals that are often more reliable than your ears, the detection tools worth knowing, and—critically—what all of this can and can't tell you.

Part 1: Why This Is Getting Harder

Early AI music generators (Mubert, early Suno, Boomy) left obvious fingerprints: robotic vocals, looping patterns, muddy mixing, and a general sense of being assembled rather than played. Current models—Suno v4, Udio, and others—produce tracks that clear these bars easily. Genre diversity, chord progressions, mixing quality, and even emotional arc have improved dramatically.

The detection challenge is real. A 2024 study from researchers at the University of Amsterdam found that human listeners correctly identified AI-generated music only slightly better than chance when the audio quality was controlled. Detection tools perform better, but they are trained on older model outputs and struggle with newer systems.

This context matters because overconfidence in detection leads to false accusations against real artists, which has real professional consequences. The goal is informed skepticism, not certainty.

Part 2: Audio Characteristics That Still Signal AI

Despite improving quality, certain patterns remain more common in AI-generated music than in human-produced recordings. None of these are definitive alone—they're signals that warrant closer attention.

Unnatural dynamics and transitions

Human music breathes. Volume shifts, builds, and drops happen in response to emotional intention. AI models sometimes produce sudden dynamic jumps that feel mechanical—not the kind of intentional contrast a producer makes, but an abrupt stitching between sections. Pay attention to transitions between verse, chorus, and bridge. Do they feel continuous, or do they feel like two separate clips placed together?
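If you want to put a rough number on "sudden dynamic jumps," one option is to measure loudness in short windows and flag large changes between neighbors. The following is a minimal sketch, not an established detector: the function names, the 1024-sample window, and the 6 dB threshold are all assumptions chosen for illustration, and it expects audio already decoded into a list of floats between -1.0 and 1.0.

```python
import math

def rms_db(samples):
    """RMS level of a block of samples, in dB relative to full scale (1.0)."""
    if not samples:
        return float("-inf")
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0:
        return float("-inf")
    return 10 * math.log10(mean_square)

def abrupt_level_jumps(samples, window=1024, threshold_db=6.0):
    """Return (window_index, change_in_db) for each large window-to-window jump.

    window and threshold_db are illustrative defaults, not calibrated values.
    """
    levels = [
        rms_db(samples[i:i + window])
        for i in range(0, len(samples) - window + 1, window)
    ]
    jumps = []
    for i in range(1, len(levels)):
        previous, current = levels[i - 1], levels[i]
        if math.isinf(previous) or math.isinf(current):
            continue  # skip digital silence, where a dB change is undefined
        change = current - previous
        if abs(change) > threshold_db:
            jumps.append((i, round(change, 1)))
    return jumps

# Synthetic demo: a quiet tone followed, with no transition, by a louder one.
quiet = [0.1 * math.sin(2 * math.pi * 8 * n / 1024) for n in range(4 * 1024)]
loud = [0.5 * math.sin(2 * math.pi * 8 * n / 1024) for n in range(4 * 1024)]
print(abrupt_level_jumps(quiet + loud))  # one jump of roughly 14 dB at window 4
```

A flagged jump only tells you where to listen. Plenty of human productions use hard cuts on purpose, so treat the output as a pointer to a transition worth hearing again rather than as evidence of generation.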

Repetitive structure without development

AI systems tend toward templates. You'll hear a motif introduced and then repeated with minimal variation—no gradual intensification, no unexpected turn, no moment where the music seems to respond to itself. Human compositions, even simple pop songs, usually have some sense of internal conversation. The chorus at the end feels different from the first chorus because something has been earned. AI tracks often don't have this.

Harmonic predictability

The I-V-vi-IV progression (C-G-Am-F in C major, or its equivalents) is everywhere in AI music because it's what the training data rewards. More tellingly, AI tracks rarely modulate to unexpected keys, use chromatic passing chords, or introduce harmonic tension that gets deliberately resolved. The harmony tends to stay safe throughout.
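To recognize the same progression in other keys, it helps to see how the Roman numerals map onto chord names. The sketch below is a small illustration with hypothetical names (`progression_in_key`, `DEGREES`). It covers only major keys and a handful of diatonic chords, and it spells every note with sharps, so a key like F major would show A# where a musician would write Bb.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
MAJOR_SCALE_STEPS = [0, 2, 4, 5, 7, 9, 11]  # semitones above the tonic
DEGREES = {"I": 0, "ii": 1, "iii": 2, "IV": 3, "V": 4, "vi": 5}

def progression_in_key(tonic, numerals):
    """Translate Roman numerals into chord names for a major key.

    Uppercase numerals are major chords; lowercase numerals are minor.
    """
    root = NOTE_NAMES.index(tonic)
    chords = []
    for numeral in numerals:
        degree = DEGREES[numeral]
        name = NOTE_NAMES[(root + MAJOR_SCALE_STEPS[degree]) % 12]
        chords.append(name + ("m" if numeral.islower() else ""))
    return chords

four_chord_loop = ["I", "V", "vi", "IV"]
print(progression_in_key("C", four_chord_loop))  # ['C', 'G', 'Am', 'F']
print(progression_in_key("G", four_chord_loop))  # ['G', 'D', 'Em', 'C']
```

Keep in mind that this progression is just as common in human-written pop, so hearing it is a weak signal on its own.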

Vocal tells

This is the area where AI still struggles most consistently:

- Breathing patterns: Breaths are either absent or placed at odd intervals. Human singers breathe in response to phrase length and emotional intensity. AI vocals often have no audible breath, or place it mid-phrase where a human never would.

- Pitch correction artifacts: Human vocals have micro-pitch variations that feel natural. AI vocals often display pitch-perfect delivery that, paradoxically, sounds slightly inhuman. The pitch is right but the expressiveness isn't there.

- Consonant and vowel shaping: Listen closely to how words end and begin. AI voices sometimes blur consonants together, clip the ends of words, or produce vowels that shift slightly in timbre mid-sustain. These are artifacts of audio synthesis rather than intentional stylistic choices.

- Emotional consistency: A human singer builds tension, backs off, pushes harder. AI vocals tend toward consistent intensity that doesn't respond to the lyric's meaning. The voice may be technically impressive but emotionally monotone.
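The "micro-pitch variation" idea from the list above can be made concrete by measuring how far a sustained note wanders from its target, in cents (hundredths of a semitone). This is a minimal sketch under stated assumptions: the function names are hypothetical, and the pitch readings would have to come from a separate pitch tracker that is not shown here. There is no agreed threshold that separates human from synthetic singing, and heavily tuned human vocals will also score close to zero.

```python
import math
import statistics

def cents_off(frequency_hz, target_hz):
    """Distance from the target pitch in cents (100 cents = 1 semitone)."""
    return 1200 * math.log2(frequency_hz / target_hz)

def pitch_spread_cents(frequencies_hz, target_hz):
    """Standard deviation of the pitch deviation across a sustained note."""
    deviations = [cents_off(f, target_hz) for f in frequencies_hz]
    return statistics.pstdev(deviations)

# Made-up readings for a held A4 (440 Hz), for illustration only.
wandering_take = [438.0, 441.5, 443.0, 439.0, 440.5]
locked_take = [440.0, 440.0, 440.0, 440.0, 440.0]

print(pitch_spread_cents(wandering_take, 440.0))  # a few cents of spread
print(pitch_spread_cents(locked_take, 440.0))     # 0.0
```

A spread near zero across many sustained notes is worth a closer listen, but it fits aggressive pitch correction just as well as synthesis.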

Spectral analysis for skeptical listeners

If you have access to a free audio editor like Audacity, you can view a track's spectrogram by switching the track to Spectrogram view from the track's dropdown menu. (Analyze → Plot Spectrum is a different display: it shows an averaged frequency plot of the selection rather than a picture of frequency over time.) AI-generated audio sometimes shows unnaturally smooth, uniform frequency bands that lack the slightly irregular texture of real acoustic instruments or room recordings. You may also see repetitive shapes or "blocky" patterns that indicate sections were synthesized in repeated chunks.

This is more reliable for identifying older AI models. Newer systems have improved spectral naturalness significantly.
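For readers who would rather measure repetition than eyeball it, here is a dependency-free sketch of the same idea: slice the audio into frames, compute a magnitude spectrum for each, and check how similar frames are to the ones a fixed distance later. Everything here is an assumption made for illustration, including the function names, the tiny 64-sample frames, and the deliberately slow naive DFT. A real analysis would use an FFT library and overlapping windows.

```python
import cmath
import math

def magnitude_spectrum(frame):
    """Naive DFT magnitudes for the lower half of the bins (slow but simple)."""
    n = len(frame)
    return [
        abs(sum(frame[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n)))
        for k in range(n // 2)
    ]

def spectrogram(samples, frame_size=64):
    """Non-overlapping frames, one magnitude spectrum (row) per frame."""
    return [
        magnitude_spectrum(samples[i:i + frame_size])
        for i in range(0, len(samples) - frame_size + 1, frame_size)
    ]

def cosine_similarity(a, b):
    """1.0 for spectra with the same shape, near 0.0 for unrelated ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def mean_similarity_at_lag(rows, lag):
    """Average similarity between each frame and the frame `lag` steps later."""
    if lag < 1 or lag >= len(rows):
        raise ValueError("lag must be between 1 and len(rows) - 1")
    scores = [cosine_similarity(rows[i], rows[i + lag]) for i in range(len(rows) - lag)]
    return sum(scores) / len(scores)

def tone(bin_index, frame_size=64):
    """One frame of a sine wave that lands exactly on a DFT bin."""
    return [math.sin(2 * math.pi * bin_index * t / frame_size) for t in range(frame_size)]

# Synthetic demo: a two-frame chunk copied four times in a row.
rows = spectrogram((tone(4) + tone(12)) * 4)
print(mean_similarity_at_lag(rows, 2))  # close to 1: frames repeat every 2 steps
print(mean_similarity_at_lag(rows, 1))  # close to 0: neighbors differ
```

Near-perfect similarity at a fixed lag points to copied chunks, but loop-based human productions repeat material exactly too, so this measures repetition and nothing more.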

Part 3: Metadata and Context: Often More Reliable Than Your Ears

For many tracks, the metadata story is easier to read than the audio itself.

Artist profile depth

A real working musician has a history: past releases, social media that predates their current work, interviews, live performance records, or at minimum a consistent presence that developed over time. AI-generated content farms often create artist profiles that appeared within the last few months, have generic bios, and show no activity outside of music uploads.

Check when the profile was created. Check whether the artist appears anywhere outside the streaming platform. A Google search for the artist name plus "interview" or "show" or "review" often quickly reveals whether a person exists behind the name.

Release volume

Human artists release music in cycles dictated by creative process, label schedules, promotion timelines, and life. An account that has published 200 tracks in four months is almost certainly automated. This doesn't mean every track is AI-generated—some accounts use AI to assist human composition—but it's a strong signal worth investigating.
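The release-volume check is simple arithmetic, and it can be scripted if you have a list of release dates copied from an artist page. The sketch below is a rough illustration: `tracks_per_month` is a hypothetical helper, a "month" is approximated as 30 days, and no particular cutoff is implied.

```python
from datetime import date, timedelta

def tracks_per_month(release_dates):
    """Average releases per 30-day month between the first and last release."""
    if len(release_dates) < 2:
        return float(len(release_dates))
    span_days = (max(release_dates) - min(release_dates)).days
    months = max(span_days / 30.0, 1.0)  # treat very short spans as one month
    return len(release_dates) / months

start = date(2025, 1, 1)

# 200 tracks spread across 120 days, as in the example above.
burst = [start + timedelta(days=(i * 120) // 199) for i in range(200)]
print(tracks_per_month(burst))  # 50.0

# 12 releases, one every 60 days.
steady = [start + timedelta(days=60 * i) for i in range(12)]
print(tracks_per_month(steady))  # well under 1 per month
```

Fifty tracks a month is far outside what the release cycles described above would produce, which is why the rate makes a useful first filter.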

Credit information

Professional releases list producers, mix engineers, mastering engineers, session musicians, and songwriters. This information appears on streaming platforms, in liner notes, and on PRO databases like ASCAP, BMI, or PRS. Missing or vague credits—"produced by [artist]" with no additional information—don't prove AI generation, but they remove one layer of verification.

Album artwork

AI-generated content operations frequently use AI-generated artwork. Reverse image search the cover art on Google Images or TinEye. If the same image appears in multiple contexts, or if the image shows the characteristic artifacts of AI image generation (inconsistent text, impossible geometry, blurred faces), that's a relevant signal.

If you're curious about how AI music actually gets created, it's worth trying an AI music generator yourself. Platforms like MusicCreator allow users to generate original songs, instrumentals, and background music within minutes using AI. Exploring how these tools work can make it easier to recognize the patterns and characteristics often found in AI-generated tracks. You can try it here: MusicCreator AI

Part 4: Detection Tools: What They Can and Can't Do

Several tools now offer AI audio detection. Here's an honest assessment of what's available.

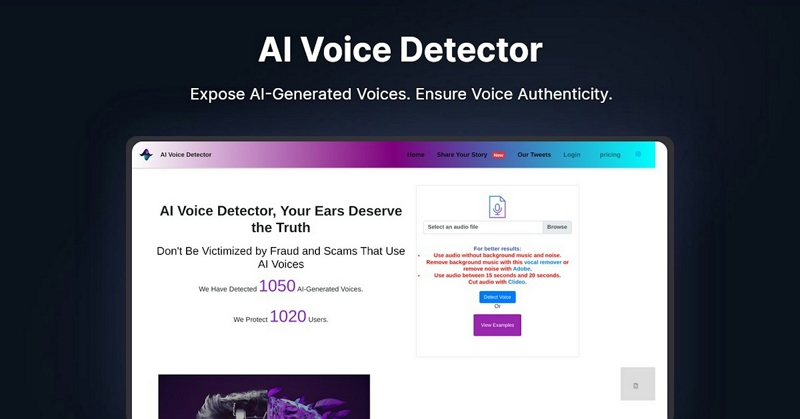

AI Voice Detector (aivoicedetector.com)

A free, browser-based tool that analyzes uploaded audio for synthetic voice patterns. Accessible and easy to use. Works best on isolated vocal tracks; mixed music with multiple elements reduces accuracy. Good for a quick first check, but treat results as one data point rather than a verdict.

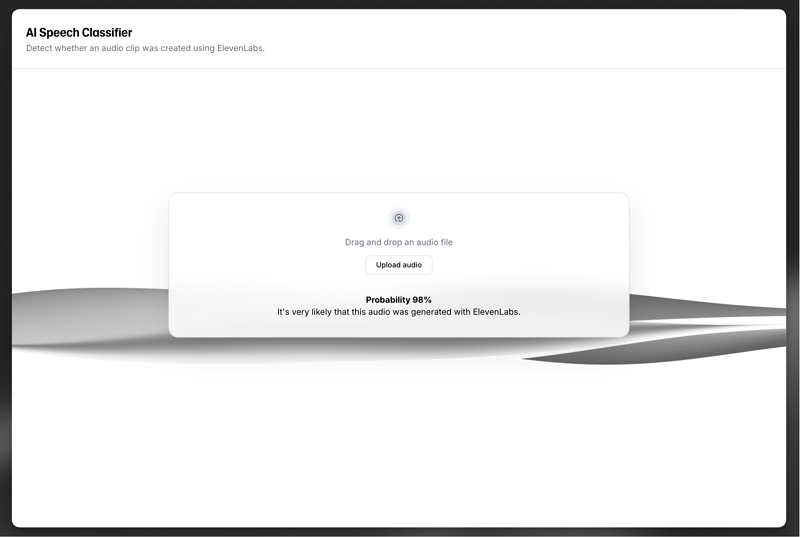

ElevenLabs AI Speech Classifier

Developed by one of the major AI voice generation companies, which lends it some credibility in recognizing what its own outputs look like. Designed primarily for speech detection rather than music. Most reliable for podcasts, voice notes, and narration rather than produced tracks with instrumentation.

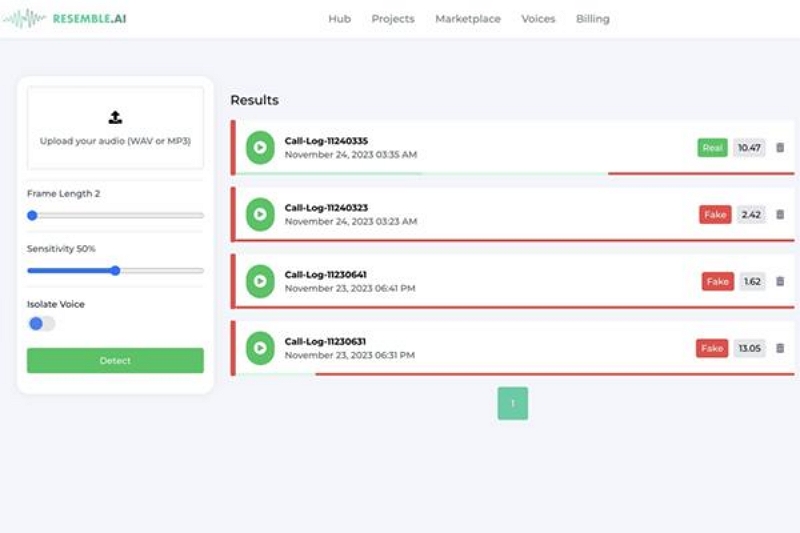

Resemble AI Detect

Enterprise-grade tool designed for deepfake audio detection. More sophisticated than consumer tools and built for high-stakes verification contexts. Not designed for casual consumer use, but worth knowing if you're working in a professional or legal context where audio authentication matters.

Pindrop

Primarily used in call center security and fraud prevention rather than music detection. Overkill for most music-related questions, but relevant if you're working on authentication in a professional context.

Manual analysis with Audacity

Free, open-source, and more revealing than most people expect. The spectrogram view lets you visually inspect frequency patterns. It has a learning curve, but it doesn't carry the false-confidence problem that AI detection tools do.

The honest limitation of all these tools

Every detection tool currently available was trained on AI outputs from earlier model generations. As AI music improves, detection accuracy decreases. Current tools are reasonably reliable for identifying outputs from models like early Suno, Boomy, and first-generation vocal clones. They're less reliable for current top-tier AI music systems.

More importantly, none of these tools can reliably handle the increasingly common middle ground: music where a human wrote the composition and lyrics, used AI for some production elements, and performed some parts themselves. The "was this AI-generated?" question often doesn't have a clean yes/no answer for contemporary music.

Part 5: The Harder Question: Human-AI Collaboration

The binary framing—AI-generated vs. human-made—misses most of what's actually happening in music production today. Many professional producers use AI tools for:

- Stem separation and audio cleanup

- Generating reference tracks or demos

- Filling in instrumentation gaps

- Vocal tuning and processing that approaches AI synthesis

A track where a human wrote the song, recorded vocals, and used AI to generate the backing instruments occupies genuinely ambiguous territory. Detection tools may flag it as AI-generated; human listeners may find it authentic; the artist may consider it their work. There's no consensus on where the line should be.

This matters for how you interpret detection results. A positive result from a detection tool means "this shows characteristics common in AI-generated audio"—not "a human didn't make this." The distinction has real implications for how you act on the information.
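A quick base-rate calculation shows why a flag is not a verdict. The sketch below applies Bayes' rule to a detector result. The function name and every number in the example are hypothetical, picked only to make the arithmetic easy to follow. They are not measured rates for any real tool.

```python
def probability_ai_given_flag(prior, true_positive_rate, false_positive_rate):
    """Chance that a flagged track really is AI-generated (Bayes' rule).

    prior: share of tracks in the pool that are AI-generated
    true_positive_rate: share of AI tracks the tool flags
    false_positive_rate: share of human tracks the tool flags by mistake
    """
    flagged_ai = prior * true_positive_rate
    flagged_human = (1 - prior) * false_positive_rate
    return flagged_ai / (flagged_ai + flagged_human)

# Hypothetical pool: 10% AI tracks, a tool that catches 90% of them
# and wrongly flags 10% of human tracks.
print(probability_ai_given_flag(0.10, 0.90, 0.10))  # 0.5
```

With those made-up numbers, half of all flagged tracks are human-made, even though the tool sounds accurate on paper. The rarer AI tracks are in the pool you're checking, the more a positive result should be read as a prompt for further investigation.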

Part 6: Practical Approach for Different Situations

If you're a casual listener trying to understand what you're hearing: the metadata investigation (artist history, release volume, credit information) is usually faster and more reliable than audio analysis. Most AI content farms leave obvious traces in the non-audio data.

If you're a creator concerned about whether your work might be mistakenly flagged: make your creative process visible. Document your sessions, maintain a consistent public presence, ensure credits are complete, and consider watermarking your audio with tools like those offered by Dolby or AudioShake.

If you're evaluating music for professional or legal purposes: don't rely on consumer detection tools alone. Combine spectral analysis, metadata investigation, provenance research, and if stakes are high, consultation with an audio forensics professional. Detection tools produce false positives and false negatives at rates that matter in high-stakes contexts.

If you're a platform or curator: consider disclosure requirements rather than detection-based exclusion. Asking artists to self-disclose AI involvement is more scalable and more accurate than attempting to detect it after the fact.

Part 7: Frequently Asked Questions

Can AI music detection tools be trusted? As a first screen, yes. As a definitive verdict, no. Current tools have meaningful false positive and false negative rates, especially for newer AI models and human-AI hybrid productions.

Is AI-generated music always lower quality than human-made music? That is no longer reliably true. Top-tier AI music systems now produce work that most listeners can't tell apart from human-made recordings on technical grounds. Quality is not a reliable indicator of AI involvement.

Is it illegal to release AI-generated music without disclosure? Copyright and disclosure rules vary by country and platform. As of 2025, most major streaming platforms require disclosure of AI-generated content, but enforcement is inconsistent. Some jurisdictions are developing more formal requirements.

Does AI-generated music have copyright protection? In most jurisdictions, fully AI-generated music without meaningful human creative input does not qualify for copyright protection. Human-AI hybrid work occupies more complex legal territory that's still being worked out by courts and legislatures.

Can AI music ever be emotionally genuine? This is more philosophical than technical. AI systems can produce music that evokes genuine emotional responses in listeners. Whether that constitutes "emotional genuineness" in the music itself is a question people disagree about significantly.

Conclusion - The Future of Music Authentication

AI-generated music is advancing fast, blurring the line between human creativity and machine-made sound. As models grow more sophisticated, the challenge of identifying synthetic tracks will only increase, pushing listeners, creators, and platforms to adopt stronger verification tools and clearer transparency standards. The future of music authentication will likely blend technology, regulation, and community awareness, ensuring that artistry, credit, and trust remain at the center of how we experience music.